Holt's Method and Marine CO2 Surface Levels

Double Exponential Smoothing (DES), also known as Holt's method, is a forecasting model that tracks an underlying trend in a time series. It builds on the concepts laid out in the component form of Simple Exponential Smoothing. In this tutorial, we will run through an example of how to perform Holt's method and see a Python implementation, then apply the model to CO2 data supplied by the National Oceanic and Atmospheric Administration.

An Easy Example

We have seen how SES can be used to smooth a time series. DES will also accomplish this, but with the added ability to follow an underlying trend. Consider the table of sales below.

| TIME (t) | SALES ($y_t$) |

|---|---|

| 1 | 20 |

| 2 | 24 |

| 3 | 26 |

| 4 | 32 |

| 5 | 33 |

We will run through the equations needed to perform DES on this time series, but we first need to define some terms.

Level and Trend

Double Exponential Smoothing will break a series into two components - the "level" and the "trend". The trend is the estimated gradient at any given point, and the level is the value when this trend component is removed. The level and trend will be denoted by $\ell_t$ and $b_t$ respectively.

To begin smoothing, we first need initial values for $\ell_t$ and $b_t$. There's many ways to initialise these values, a simple way is to set $\ell_2 = y_1$ and $b_t$ to be the difference between $y_1$ and $y_2$, ie $24-20=4$. Later on we'll look at using linear regression to obtain an estimate, for now we'll fill in the table with these values.

| TIME (t) | SALES ($y_t$) | LEVEL ($\ell_t$) | TREND ($b_t$) |

|---|---|---|---|

| 1 | 20 | ||

| 2 | 24 | 20.00 | 4.00 |

| 3 | 26 | ||

| 4 | 32 | ||

| 5 | 33 |

The Smoothing Equations

The two equations we will be implementing are as follows.

$$\ell_t = \alpha y_t + (1 - \alpha)(\ell_{t-1} + b_{t-1})$$ $$b_t = \beta(\ell_t - \ell_{t-1}) + (1 - \beta)b_{t-1}$$The formula for calculating the level, $\ell_t$, is similar to the one used in SES but with the addition of the trend component, $b_t$. At each step we will now update the trend component which requires its own smoothing parameter, $\beta$. So we will have two smoothing parameters, alpha and beta. Let's set $\alpha = 0.2$ and $\beta = 0.1$, and we can go ahead and calculate $\ell_3$

$$\ell_t = \alpha y_t + (1 - \alpha)(\ell_{t-1} + b_{t-1})$$ $$\ell_3 = 0.2(26) + (1 - 0.2)(24 + 4)$$$$\ell_3 = 27.60$$Similarly, we can calculate the trend component, $b_3$

$$b_t = \beta(\ell_t - \ell_{t-1}) + (1 - \beta)b_{t-1}$$$$b_3 = 0.1(27.6 - 24.0) + (1 - 0.1)4.0$$$$b_3 = 3.96$$This process of calculating the level and trend can be repeated so the rest of the table can be filled.

| TIME (t) | SALES ($y_t$) | LEVEL ($\ell_t$) | TREND ($b_t$) |

|---|---|---|---|

| 1 | 20 | ||

| 2 | 24 | 24.00 | 4.00 |

| 3 | 26 | 27.60 | 3.96 |

| 4 | 32 | 31.65 | 3.97 |

| 5 | 33 | 35.09 | 3.92 |

Making a Forecast

To get the forecast at each step, we add the level and trend together from the previous step. We can only start at t=3 due to the level and trend beginning at t=2.

$$Forecast, \hat{y}_{t} = l_{t-1} + b_{t-1}$$| TIME (t) | SALES ($y_t$) | LEVEL ($\ell_t$) | TREND ($b_t$) | FORECAST ($\hat{y}$) |

|---|---|---|---|---|

| 1 | 20 | |||

| 2 | 24 | 24.00 | 4.00 | |

| 3 | 26 | 27.60 | 3.96 | 28.00 |

| 4 | 32 | 31.65 | 3.97 | 31.56 |

| 5 | 33 | 35.09 | 3.92 | 35.62 |

If we want to forecast into the future, we can do so by using the last value for the level and adding h steps of the trend.

$$\hat{y}_{t+h} = \ell_{t-1} + h * b_{t-1}$$For example, if we want to forecast $y_9$ (which would make t=5, h=4), we calculate

$$\begin{aligned} \hat{y}_9 &= \ell_{5} + 4 * b_{5} \\ \hat{y}_9 &= 35.09 + 4 * 3.92 \\ \hat{y}_9 &= 50.77 \\ \end{aligned}$$CO2 Surface Level Data

To run through a Python implementation, we'll use data supplied by the National Oceanic and Atmospheric Administration (NOAA). The particular dataset will be the annual mean of globally averaged marine CO2 surface levels, which can be downloaded here.

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

# Import our data

co2 = pd.read_csv('data/co2_annmean_gl.csv', skiprows=55)

# Plot data

plt.figure(figsize=(12,4))

plt.plot(co2['year'],co2['mean'])

plt.title("Globally Averaged Marine Surface Annual Mean")

plt.ylabel("CO2 (ppm)")

plt.xlabel("Year")

plt.show()

Initialising Level and Trend with Linear Regression

If we were to initialise the level and trend as before, the beginning of our table would look like the following.

| TIME (t) | YEAR | CO2 (ppm) | LEVEL | TREND | FORECAST |

|---|---|---|---|---|---|

| 1 | 1980 | 338.91 | |||

| 2 | 1981 | 340.1 | 340.1 | 1.19 | |

| 3 | 1982 | 340.86 | 341.29 | ||

| 4 | 1983 | 342.53 |

It would be nice if we could create the smoothed series beginning from t=1. One approach is to use linear regression to find the line of best fit to get an estimated level and trend for t=0. You can use your favourite linear regression model, I'm going to use the function from the Moore-Penrose Inverse tutorial.

def lss(A: np.ndarray, b: np.ndarray) -> np.ndarray:

"""

Least squares solution for the linear system Ax=b.

Returns an array of the coefficients, the first value

is the intercept/bias.

"""

if not all(isinstance(obj, np.ndarray) for obj in [A,b]):

return 'Arguments must be of type np.ndarray'

if A.ndim == 1:

A = A.reshape(-1,1)

A_ = np.append(np.ones((A.shape[0],1)), A, axis=1)

return np.linalg.pinv(A_) @ b

We'll use the first 10 measurements in the CO2 dataset and find the line of best fit.

i = 10

intercept, grad = lss(np.arange(1,i+1), co2.loc[:i-1,'mean'].values)

print(f'Intercept: {intercept:.3f}, gradient: {grad:.3f}')

# Plot the line of best fit

x = np.arange(i+1)

plt.figure(figsize=(12,4))

plt.scatter(x[1:],co2.loc[:i-1,'mean'])

plt.plot(x, intercept + grad*x, linestyle='--', color='black')

plt.title("Globally Averaged Marine Surface Annual Mean")

plt.xticks(x)

plt.ylabel("CO2 (ppm)")

plt.xlabel("Time (t)")

plt.show()

If we fill in the table with the intercept and gradient for t=0, forecasting can begin from t=1.

| TIME (t) | YEAR | CO2 (ppm) | LEVEL | TREND | FORECAST |

|---|---|---|---|---|---|

| 0 | 336.622 | 1.552 | |||

| 1 | 1980 | 338.91 | 338.174 | ||

| 2 | 1981 | 340.1 | |||

| 3 | 1982 | 340.86 |

Changing the Frame of Reference

To implement Holt's method elegantly, we'll change the frame of reference. We're going to shift some of the columns up, which will allow us to calculate the forecast column by simply adding the level and trend columns.

| TIME (t) | YEAR | CO2 (ppm) | LEVEL | TREND | FORECAST |

|---|---|---|---|---|---|

| 0 | $\uparrow$ | $\uparrow$ | 336.622 | 1.552 | $\uparrow$ |

| 1 | 1980 | 338.91 | 338.174 | ||

| 2 | 1981 | 340.1 | |||

| 3 | 1982 | 340.86 |

| TIME (t) | YEAR | CO2 (ppm) | LEVEL | TREND | FORECAST |

|---|---|---|---|---|---|

| 0 | 1980 | 338.91 | 336.622 | 1.552 | 338.174 |

| 1 | 1981 | 340.1 | |||

| 2 | 1982 | 340.86 |

With this change of reference, the formulas will change slightly.

$$\ell_t = \alpha y_{t-1} + (1 - \alpha)(\ell_{t-1} + b_{t-1})$$ $$b_t = \beta(\ell_t - \ell_{t-1}) + (1 - \beta)b_{t-1}$$$$\hat{y}_{t} = l_t + b_t$$The function below will take a time-series and perform double exponential smoothing using the parameters alpha and beta for the smoothing factors. It will use the final values for the level and trend to make a prediction h steps into the future.

def dbl_exp_smoothing(ts, alpha, beta, h):

"""

Perform Double Exponential Smoothing on a time-series

Parameters

----------

ts : np.ndarray

One-dimensional time-series

alpha : float

Smoothing factor for the level, with `0 < alpha <= 1`

beta : float

Smoothing factor for the trend, with `0 < beta <= 1`

h : int

Number of steps into the future to forcast, `h >= 0`

Returns

-------

forecast : np.ndarray

One-dimensional array of smoothed series with a forecast

h-steps into the future

"""

n = len(ts)

lvl = np.zeros(n)

b = np.zeros(n)

i = min(n, 10) # Number of starting points <10 to use for grad/intercept estimation

lvl[0], b[0] = lss(np.arange(1,i+1), ts[:i]) # Grad/interecept estimation

for i in range(1,n):

lvl[i] = alpha*ts[i-1] + (1 - alpha)*(lvl[i-1] + b[i-1])

b[i] = beta*(lvl[i]-lvl[i-1]) + (1 - beta)*b[i-1]

forecast = lvl + b

if h:

pred = np.zeros(h)

for i in range(1,h+1):

pred[i-1] = forecast[-1] + i * b[-1]

forecast = np.append(forecast, pred)

return forecast

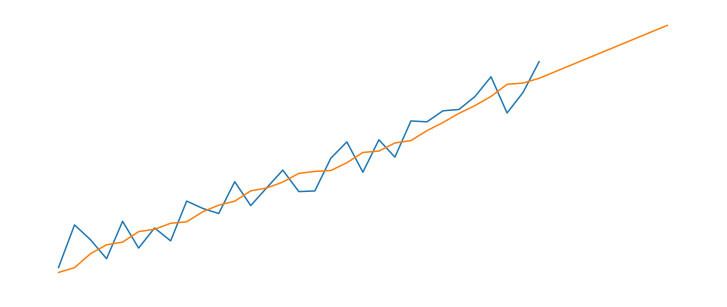

We can now pass the CO2 time-series into the double_exp_smoothing function to return the forecast. For this initial attempt, we'll set h=0, which means the forecast will be "in-sample" only.

ts = co2['mean'].values

forecast = dbl_exp_smoothing(ts, alpha=0.2, beta=0.1, h=0)

# Plot results

plt.figure(figsize=(12,4))

plt.plot(co2['year'], ts, label="Actual")

plt.plot(co2['year'], forecast, linestyle='--', label="Forecast")

plt.xlabel('Year')

plt.ylabel('CO2 (ppm)')

plt.legend()

plt.show()

The result looks okay, though the forecast appears to be consistently under-estimating the series for around the year 2000 onwards. Perhaps we can improve the fit by optimising the smoothing factors.

Optimising Alpha and Beta

Below are the functions required for optimising the values for alpha and beta. The fit() function takes the time series, intialises starting values for the level and trend, then uses scipy.optimize.minimize to find the the optimal values for params (ie, alpha and beta) by minimising the des_error function. The des_error function constructs a smoothed series and returns the squared euclidean between the smoothed series and the actual series.

from scipy.spatial.distance import sqeuclidean

from scipy.optimize import minimize

def des_error(params, ts, lvl, b, n):

alpha, beta = params

for i in range(1,n):

lvl[i] = alpha*ts[i-1] + (1 - alpha)*(lvl[i-1] + b[i-1])

b[i] = beta*(lvl[i]-lvl[i-1]) + (1 - beta)*b[i-1]

return sqeuclidean(lvl+b, ts)

def fit(ts):

n = len(ts)

lvl = np.zeros(n)

b = np.zeros(n)

i = min(10, n) # Number of data points for grad/intercept estimation

lvl[0], b[0] = lss(np.arange(1,i+1), ts[:i])

alpha_init = 0.2

beta_init = 0.1

params = [alpha_init, beta_init]

bnds = [(0,1),(0,1)]

args = (ts,lvl,b,n)

optimised = minimize(des_error, params, args=args,

bounds=bnds)

return optimised.x

opt = fit(co2['mean'].values)

print('Optimal smoothing factors')

print(f'Alpha: {opt[0]:.3f}\nBeta: {opt[1]:.3f}')

Making a Prediction

We can now use the smoothing factors, stored in opt, to make a final prediction into the future. I'll set m=20, which will take the forecast to the year 2040.

h = 20 # Number of steps to predict into the future

ts = co2['mean'].values

forecast = dbl_exp_smoothing(ts, alpha=opt[0], beta=opt[1], h=h)

yr_init = co2.loc[0,'year']

# Plot results

plt.figure(figsize=(12,4))

plt.plot(co2['year'], ts, label="Actual")

plt.plot(np.arange(yr_init,yr_init+len(ts)+h), forecast,

linestyle='--', label="Forecast")

plt.xlabel('Year')

plt.ylabel('CO2 (ppm)')

plt.legend()

plt.show()

print(f'Year 2040 forecast: {forecast[-1]:.2f} ppm')

The fit appears a lot better. Making a prediction 20 years into future gives us a CO2 value of 459.76ppm. We could continue to extrapolate the forecast, but this is going to continue on in a linear fashion to infinity, which is impossible. The next step would be to introduce a dampening factor, which would plateau the forecast. Perhaps that will be explored in a future tutorial.

We'll instead turn our attention to Triple Exponential Smoothing, which will add a seasonal component into the model.